Prism: AI for Idea Clarification

Turning vague ideas into clear, structured specs before AI execution.


In my last post, I wrote about Helm as a decision layer.

At the time, I was interested in a specific question: if AI keeps getting better at execution, what remains as the human role? My answer was that the center of gravity moves upward. From doing, to deciding. From operating, to steering.

I still think that is true. But while thinking more about Helm, I kept running into a simpler problem that came earlier.

A surprising amount of work does not fail at decision. It fails before that, when the thing itself is still vague.

That was the starting point for Prism.

Where the idea started

Lately, I have been using AI a lot for building side projects. And I noticed a pattern.

Many ideas begin in a form that feels good enough to start, but is not actually clear enough to build. Sometimes it is one sentence in my notes. Sometimes it is a rough product instinct. Sometimes it is a task that sounds obvious until I try to explain what success looks like.

In the past, this kind of vagueness was inconvenient, but manageable. You would start anyway, then clarify along the way.

But AI changes that dynamic.

AI is good enough to run with almost anything. Give it a directionally correct prompt, and it will produce something coherent. Sometimes something impressive. But coherence is not the same as alignment. The system can move quickly while carrying forward all the ambiguity already present in the original idea.

That became more obvious to me the more I used coding agents.

The problem was often not that the model was weak. The problem was that I was handing it something under-clarified. And once the workflow starts moving, vague intent does not disappear. It gets embedded into the artifacts downstream.

That made me think that there is an important layer before execution, and even before decision: clarification.

Why I decided to build Prism

At first, this was not a grand product idea. It was a workflow frustration.

I kept wanting a place where I could take a rough thought and pressure-test it before handing it over to an AI coding agent. Not a blank document where I had to write a full PRD from scratch, and not a chatbot that would immediately jump into generation. Something in between.

I wanted a system that would slow me down in the right way.

Not by adding friction for its own sake, but by asking the questions I was most likely to skip:

- What exactly am I trying to make?

- Who is this for?

- What constraints actually matter?

- What is still unresolved?

- Is this ready for execution, or only emotionally ready because I want to move on?

That was the core idea behind Prism.

The name came from the metaphor. A prism does not create something new. It separates what was already there.

That felt right for early ideas. Most of them are not empty. They are compressed. Goal, user, scope, assumptions, and instinct are all there, but bundled together too tightly to inspect. What I wanted was a way to separate those components before execution started.

How I built the product

I was especially inspired by two things.

The first was the plan mode of coding agents. I liked the idea that the system should not immediately jump into action, but should spend time shaping the work first.

The second was Ouroboros, an open-source project that takes specification seriously. What I found compelling there was not only the implementation, but the attitude underneath it: ambiguity should be surfaced early, and readiness should be earned rather than assumed.

Prism became my attempt to turn that instinct into a more focused product.

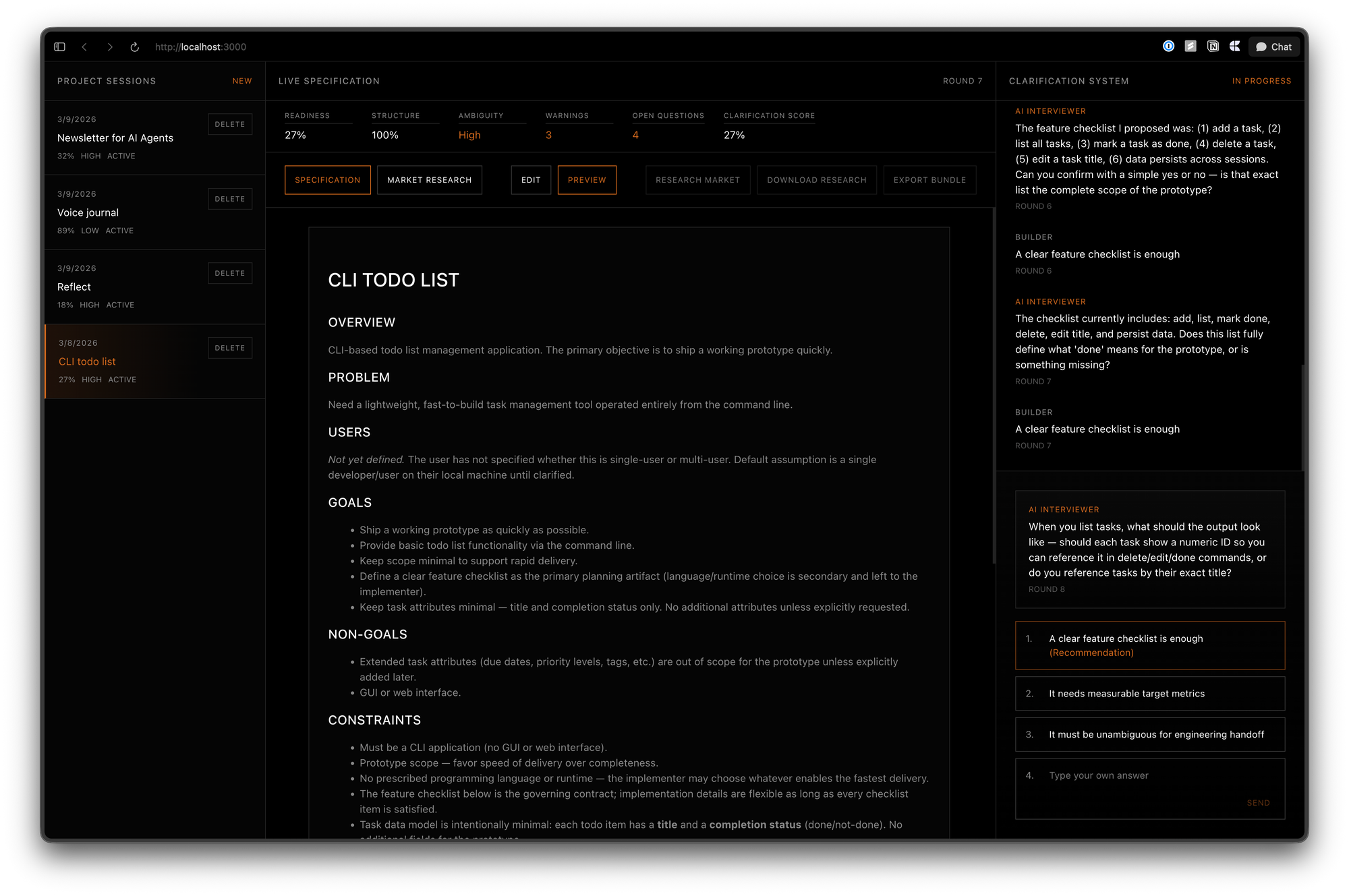

I designed it around a very simple workflow:

- Start with a rough title and a short idea.

- Prism generates an initial draft, then begins asking one question at a time.

- As the conversation continues, the spec updates in the center.

- Prism keeps tracking a Readiness Score, Structure, Ambiguity, Warnings, and Open Questions.

- Once the readiness score goes above 80%, the spec can be exported as a bundle that coding agents can use.

- You can also run market research based on the spec: using the Exa API, Prism searches the web to map out the market dynamics.
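To make the gating in this workflow concrete, here is a minimal sketch in Python. It is an illustration, not Prism's actual code: the names `Tracking`, `can_export`, and `next_step` are hypothetical, the 0-to-1 scale and the strict "greater than" comparison are my assumptions, and the sketch takes the readiness score as given rather than showing how it is computed.

```python
from dataclasses import dataclass, field

# The workflow unlocks export once readiness goes above 80%.
# Representing it as 0.80 on a 0-to-1 scale is an assumption.
EXPORT_THRESHOLD = 0.80


@dataclass
class Tracking:
    """Hypothetical snapshot of what is tracked during a session."""
    readiness: float  # 0.0 to 1.0, supplied by the caller
    warnings: list[str] = field(default_factory=list)
    open_questions: list[str] = field(default_factory=list)


def can_export(state: Tracking) -> bool:
    """Export is allowed only when readiness is strictly above the threshold."""
    return state.readiness > EXPORT_THRESHOLD


def next_step(state: Tracking) -> str:
    """Either export, or surface exactly one question to ask next."""
    if can_export(state):
        return "export"
    if state.open_questions:
        return "ask: " + state.open_questions[0]
    return "ask: what is still unclear about this idea?"


if __name__ == "__main__":
    draft = Tracking(readiness=0.55, open_questions=["Who is this for?"])
    print(next_step(draft))
    print(next_step(Tracking(readiness=0.85)))
```

The point of the sketch is the shape of the loop: the system never asks more than one question at a time, and export stays closed until the score says the spec has earned it.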

The part that matters most to me is how the system turns conversation into a structured artifact. I did not want the output of clarification to disappear into a conversation log. I wanted it to become a document that could actually be inspected, edited, and handed off downstream. So Prism keeps track of things like goals, target users, constraints, success criteria, open questions, and unresolved assumptions.
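As a rough illustration of what such a structured artifact could look like, here is a small Python sketch. The field names mirror the list above, but the `Spec` class, `empty_sections`, and `to_markdown` are hypothetical names I am using for illustration, and the choice of which sections count as required is an assumption rather than a description of Prism's internals.

```python
from dataclasses import dataclass, field, fields


@dataclass
class Spec:
    """Hypothetical structured spec that a clarification session fills in."""
    title: str
    goals: list[str] = field(default_factory=list)
    target_users: list[str] = field(default_factory=list)
    constraints: list[str] = field(default_factory=list)
    success_criteria: list[str] = field(default_factory=list)
    open_questions: list[str] = field(default_factory=list)
    unresolved_assumptions: list[str] = field(default_factory=list)


def empty_sections(spec: Spec) -> list[str]:
    """Return the required sections that have no content yet (assumed set)."""
    required = ("goals", "target_users", "constraints", "success_criteria")
    return [name for name in required if not getattr(spec, name)]


def to_markdown(spec: Spec) -> str:
    """Render the spec as a document that can be inspected, edited, and handed off."""
    lines = [f"# {spec.title}"]
    for f in fields(spec):
        if f.name == "title":
            continue
        heading = f.name.replace("_", " ").title()
        lines.append("")
        lines.append(f"## {heading}")
        items = getattr(spec, f.name)
        if items:
            lines.extend(f"- {item}" for item in items)
        else:
            lines.append("- (none yet)")
    return "\n".join(lines)


if __name__ == "__main__":
    spec = Spec(title="Prism", goals=["Turn a rough idea into a clear spec"])
    print(empty_sections(spec))
    print(to_markdown(spec))
```

Keeping open questions and unresolved assumptions as first-class fields, rather than leaving them in a chat log, is what lets the document itself show how much is still undecided.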

What I learned

Building Prism clarified a few things for me:

- Most of the time, AI does not fail by misunderstanding us, but by faithfully acting on intent that was never fully formed to begin with.

- As systems become more automated, the starting condition matters more. In slower workflows, ambiguity could be corrected along the way. But in faster, more agentic workflows, the initial state becomes part of the foundation. A weak beginning is no longer just a temporary inconvenience. It shapes everything that follows.

- Clarification deserves its own interface.

Not everything should collapse into chat. Not everything should jump straight into execution. There is real value in a product surface built specifically for turning vague intent into something legible.

I think this will matter more as AI products become more autonomous and more self-improving. A powerful system can preserve and compound whatever it was given. That means a self-improving workflow cannot reliably outgrow a poorly formed beginning.

Better automation does not remove the need for clarity. It raises the price of not having it.

Why I think this category matters

Prism is still a side project. It is a narrow product. But I think the category behind it is larger than this one tool.

If AI keeps getting better at execution, then more leverage moves upward. Part of that leverage sits in decision-making, which is what I was trying to capture with Helm. But part of it sits even earlier, in making the problem legible enough that downstream systems can work on the right thing.

That is the layer Prism is exploring. A place where rough intent becomes structured enough to trust.

Closing

I started Prism because I kept running into the same problem in my own workflow: I had ideas that were promising enough to pursue, but not clear enough to hand off.

Building it made me more convinced that this is not just a personal productivity issue. It is becoming part of the interface problem of the AI era.

As systems become more capable, the human role may lie less in doing everything manually and more in shaping the beginning well enough that the system can grow in the right direction.

Helm is about steering.

Prism is about making the thing clear enough to steer in the first place.

MJ Kang Newsletter