Helm: The Decision Layer for Agent-Native Organization

Over the past year, AI models have improved at a pace that still feels unreal. Capabilities that once required specialized teams are now accessible through a single interface. Models can reason, write code, analyze data, draft strategy documents, and operate tools. More importantly, they can act.

My own relationship with AI shifted when I started using Codex as an agent rather than as a chatbot. Instead of asking for snippets of help, I began delegating real work. I could build personal products at a speed I never thought possible. Ideas that once sat idle because they required too much engineering effort suddenly became shippable in days.

Then tools like OpenClaw and Claude Code extended this further. It wasn’t just about code generation anymore. Agents could coordinate tasks, persist memory, reason across longer contexts, and operate well beyond developer workflows. Non-technical tasks, such as research, planning, content creation, and analysis, became structured, delegated processes.

Execution was no longer the bottleneck.

And that led to a more ambitious question:

What if there were a company composed entirely of AI agents?

No human employees.

Just agents.

Strategy, growth, engineering, finance, risk.

All AI.

And you are the CEO.

First Problem: Decisions

When people imagine agent organizations, they often focus on coordination. How do agents communicate? How do they share memory? How do they call tools safely?

These are real problems. But they are not the deepest one.

The deeper problem is decision-making.

Most meaningful choices in business do not have a correct answer. They involve trade-offs.

- Do you prioritize speed or safety?

- Do you optimize for growth or margin?

- Do you launch now or wait for brand alignment?

- Do you accept short-term risk for long-term positioning?

An agent-only company will constantly surface these tensions. Different agents optimize for different objective functions. A growth agent pushes expansion. A finance agent controls burn. A risk agent flags exposure. A strategy agent protects long-term coherence.

All are rational. All are useful.

But rational optimization is not strategy.

Strategy is preference under uncertainty.

As the founder of your own agent-only company, especially in the early stages, you will make an extraordinary number of decisions. Not because agents are incapable, but because there is no universal objective function that captures your taste, your risk appetite, your ambition, or your long-term vision.
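To make the objective-function argument concrete, here is a minimal Python sketch under invented assumptions: three options scored on hypothetical growth, margin, and safety dimensions, with each agent modeled as a simple weighting over those dimensions. The names (`Option`, `AGENT_OBJECTIVES`, `best`) and all numbers are illustrative, not part of any framework.

```python
from dataclasses import dataclass


# Hypothetical options scored from 0 to 1 on three illustrative dimensions.
@dataclass(frozen=True)
class Option:
    name: str
    growth: float
    margin: float
    safety: float


OPTIONS = [
    Option("launch_now", growth=0.9, margin=0.4, safety=0.3),
    Option("wait_for_brand", growth=0.5, margin=0.6, safety=0.8),
    Option("cut_burn", growth=0.2, margin=0.9, safety=0.7),
]

# Each agent's objective is a weighting over the same dimensions (assumed, for illustration).
AGENT_OBJECTIVES = {
    "growth_agent": {"growth": 1.0, "margin": 0.0, "safety": 0.0},
    "finance_agent": {"growth": 0.0, "margin": 1.0, "safety": 0.0},
    "risk_agent": {"growth": 0.0, "margin": 0.0, "safety": 1.0},
}


def score(option: Option, weights: dict[str, float]) -> float:
    # Weighted sum of the option's dimensions under one objective.
    return sum(w * getattr(option, dim) for dim, w in weights.items())


def best(weights: dict[str, float]) -> str:
    # The option an optimizer with these weights would pick.
    return max(OPTIONS, key=lambda o: score(o, weights)).name


# Every agent is rational under its own objective, yet they disagree.
for agent, weights in AGENT_OBJECTIVES.items():
    print(agent, "->", best(weights))

# The founder's weights (invented here) are the objective no agent can derive on its own.
founder = {"growth": 0.4, "margin": 0.2, "safety": 0.4}
print("founder ->", best(founder))
```

Each agent picks a different winner, and none of them is wrong under its own objective. Only the founder's weights select among them, here choosing `wait_for_brand`.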

Over time, something interesting happens. Agents observe your choices. They internalize patterns. They adapt to your preference structure. There is a compounding effect. The organization begins to anticipate you. Decision velocity increases. Alignment deepens.

But that only works if decisions are made clearly, explicitly, and structurally.
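One way agents could internalize patterns from explicit choices is sketched below. It swaps in a structured-perceptron update, one simple technique among many, and assumes every decision is recorded as the options presented (hypothetical `[growth, safety]` feature vectors) plus the index the founder chose. The names `Decision`, `predict`, and `learn_preferences` are illustrative; nothing here describes how any existing agent framework learns preferences.

```python
# A recorded decision: the options presented, as feature vectors, and the index chosen.
# Feature order (hypothetical): [growth, safety].
Decision = tuple[list[list[float]], int]


def dot(w: list[float], x: list[float]) -> float:
    return sum(wi * xi for wi, xi in zip(w, x))


def predict(w: list[float], options: list[list[float]]) -> int:
    # The option the current preference model expects the founder to pick.
    return max(range(len(options)), key=lambda i: dot(w, options[i]))


def learn_preferences(decisions: list[Decision], epochs: int = 20) -> list[float]:
    """Structured-perceptron update: nudge weights toward each choice the model got wrong."""
    w = [0.0] * len(decisions[0][0][0])
    for _ in range(epochs):
        mistakes = 0
        for options, chosen in decisions:
            guess = predict(w, options)
            if guess != chosen:
                # Move toward the chosen option and away from the wrongly predicted one.
                w = [wi + c - g for wi, c, g in zip(w, options[chosen], options[guess])]
                mistakes += 1
        if mistakes == 0:  # every recorded choice is now explained by w
            break
    return w


# Three recorded decisions from a founder who quietly weighs safety over growth.
history: list[Decision] = [
    ([[0.9, 0.2], [0.3, 0.8]], 1),
    ([[0.6, 0.6], [1.0, 0.1]], 0),
    ([[0.8, 0.5], [0.4, 0.7]], 1),
]
w = learn_preferences(history)

# A new decision: the model now anticipates the safer option (index 1).
print(predict(w, [[0.7, 0.4], [0.2, 0.9]]))
```

The sketch only works because each decision was captured as structured data; a free-form chat log would first have to be parsed into this shape. The learned weights (about `[-0.6, 0.6]`) reproduce the recorded choices and anticipate the new one, but they encode a ranking, not the founder's true weights.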

Which leads to the next realization.

The Role of the Human Changes

In an agent-only company, execution becomes cheap.

When execution is cheap, it is no longer your leverage.

Your leverage becomes judgment.

Your thoughts.

Your taste.

Your framing of trade-offs.

Jeff Bezos once said that a senior leader’s job is to make a small number of high-quality decisions each day. If you make three good decisions per day, that is enough. The implication is subtle but powerful: Leadership is not about volume. It is about clarity under uncertainty.

In a world where agents can execute far faster than humans, this becomes even more true. The human is no longer inside the operational loop. The human sits above it.

Not as an operator.

As a decision authority.

But current agent systems do not treat this role as a first-class concept.

Most frameworks, including OpenClaw, are designed around orchestration and execution. They enable agent collaboration, memory persistence, and tool usage. They are powerful engines.

What they do not provide is a structured decision layer.

Interaction still largely happens through chat. You ask, they respond. You refine. They execute.

That is sufficient for task delegation. It is insufficient for governance.

Interestingly, you can see hints of this missing layer in tools like Claude Code or in Codex’s plan mode. Before executing, they ask clarifying questions. They surface trade-offs. They force you to commit. Even in small ways, they acknowledge that execution should follow an explicit decision.

That pattern deserves to be formalized.

Why Helm Is Necessary

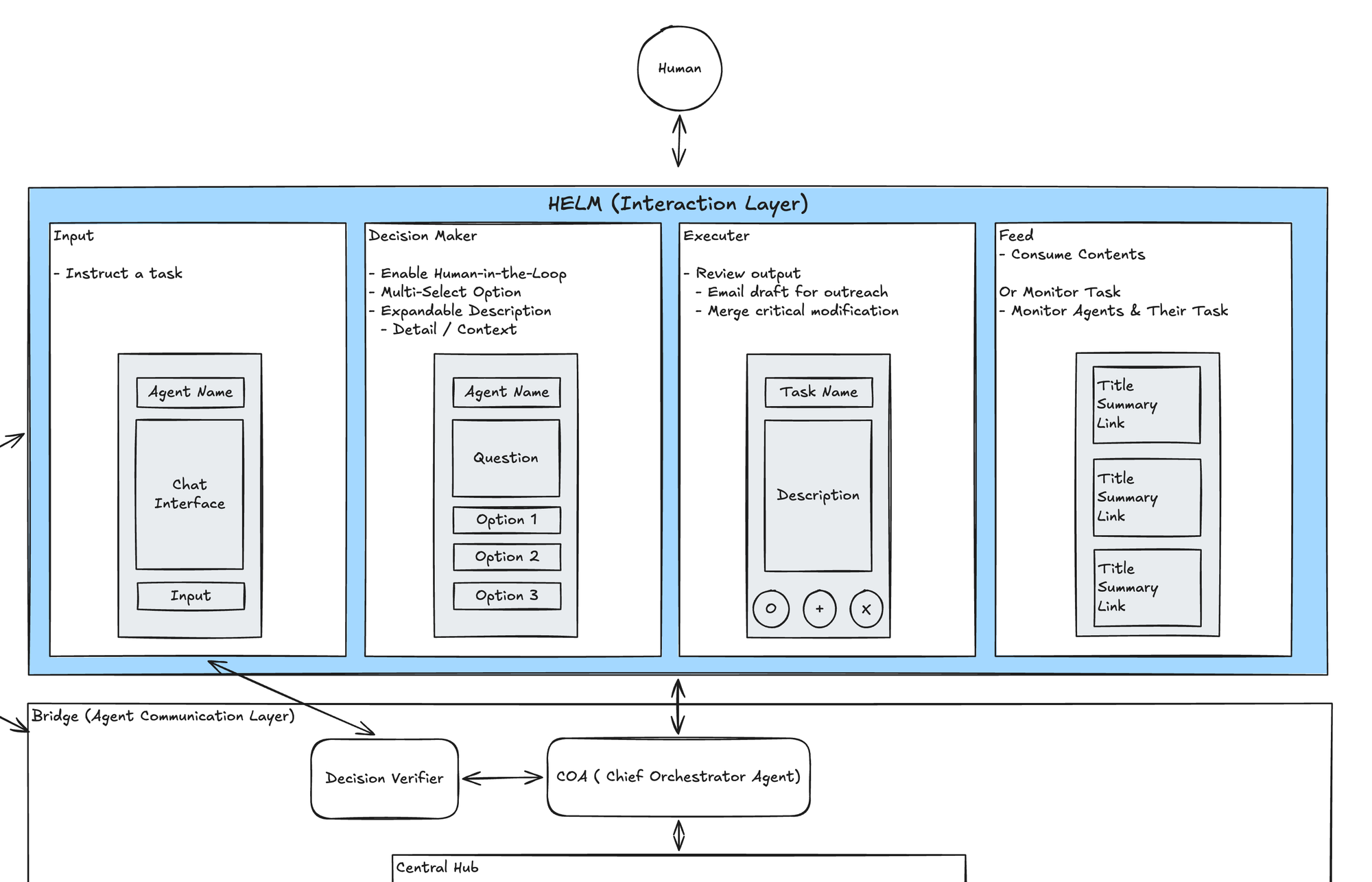

As I mapped the architecture of an agent-only organization, I realized something was missing above the agents themselves. A layer that was not another agent, but an interface for structured decision-making.

I started calling it Helm.

Helm is not a reasoning model. It is not an orchestration engine. It is not a supervisor LLM.

It is an application layer that transforms agent outputs into decision surfaces.

Instead of receiving five disconnected recommendations, you see three strategic paths. Instead of implicit trade-offs, you see explicit ones. Instead of vague uncertainty, you see quantified exposure. Instead of conversational iteration, you see structured commitment.

Helm asks: Which path do you choose?

It records the choice. It propagates that decision downward. It shapes the future behavior of the agents.

In other words, Helm encodes taste.
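The loop described above (group agent outputs into paths, make trade-offs and exposure explicit, record a choice, propagate it) can be sketched as a small data model. Everything here is hypothetical: `Recommendation`, `Path`, `Commitment`, `build_surface`, and `Helm.decide` are illustrative names, exposure is assumed to be a dollar figure, and propagation is reduced to notifying subscriber callbacks. It is a sketch under those assumptions, not Helm's actual design, which the essay leaves open.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from typing import Callable


# Hypothetical shape of one agent's output; not an API from OpenClaw or any other framework.
@dataclass(frozen=True)
class Recommendation:
    agent: str       # e.g. "growth", "finance", "risk"
    path: str        # the strategic path this recommendation argues for
    argument: str    # the agent's case, phrased as a trade-off
    exposure: float  # the agent's downside estimate (assumed unit: dollars at risk)


@dataclass(frozen=True)
class Path:
    name: str
    trade_offs: dict[str, str]  # agent -> argument, so every trade-off is explicit
    exposure: float             # worst-case exposure reported by any supporting agent


@dataclass(frozen=True)
class Commitment:
    question: str
    chosen: str
    rejected: tuple[str, ...]
    rationale: str


def build_surface(recs: list[Recommendation]) -> list[Path]:
    """Collapse disconnected recommendations into a few comparable paths."""
    grouped: dict[str, list[Recommendation]] = defaultdict(list)
    for rec in recs:
        grouped[rec.path].append(rec)
    return [
        Path(
            name=name,
            trade_offs={r.agent: r.argument for r in group},
            exposure=max(r.exposure for r in group),
        )
        for name, group in grouped.items()
    ]


@dataclass
class Helm:
    log: list[Commitment] = field(default_factory=list)
    # Hypothetical propagation mechanism: each agent registers a callback.
    subscribers: list[Callable[[Commitment], None]] = field(default_factory=list)

    def decide(self, question: str, paths: list[Path], choice: str, rationale: str) -> Commitment:
        names = [p.name for p in paths]
        if choice not in names:
            raise ValueError(f"{choice!r} is not one of {names}")
        commitment = Commitment(
            question, choice, tuple(n for n in names if n != choice), rationale
        )
        self.log.append(commitment)      # record the choice
        for notify in self.subscribers:  # propagate it downward
            notify(commitment)
        return commitment


# Usage: five recommendations from different agents, three underlying paths.
recs = [
    Recommendation("growth", "launch_now", "ship this week to capture demand", 50_000),
    Recommendation("engineering", "launch_now", "core flow is ready, edge cases are not", 20_000),
    Recommendation("strategy", "wait_for_brand", "align the launch with the brand refresh", 10_000),
    Recommendation("risk", "wait_for_brand", "compliance review finishes in two weeks", 5_000),
    Recommendation("finance", "cut_scope", "run a smaller pilot to limit burn", 8_000),
]
paths = build_surface(recs)

received: list[Commitment] = []
helm = Helm(subscribers=[received.append])
helm.decide("How should we launch?", paths, "wait_for_brand",
            "brand coherence outweighs two weeks of demand")
```

Five recommendations collapse into three comparable paths, and the commitment lands both in `helm.log` and with every subscribed agent. Keeping the rejected paths and the rationale in the record is a deliberate choice in this sketch: it tells agents not only what the founder chose but what the founder was willing to give up.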

Without a decision layer, an agent-only company risks becoming a highly efficient but strategically incoherent machine. Agents will optimize locally. But without a place where global trade-offs are resolved explicitly, the organization drifts.

Helm anchors it.

Future Plans

Today, Helm is not a product. It is my personal operating system experiment.

I am exploring what a true decision interface between a human and an agent organization should look like. How should trade-offs be visualized? How should uncertainty be framed? How should commitments be tracked over time so that agents can compound alignment?

Helm began as a curiosity. It now feels like infrastructure.

If agent-native organizations become common (and I believe they will), the limiting factor will not be model capability. It will be governance clarity.

Imagine a world where every individual can spin up their own company composed of hundreds of AI agents. Product, marketing, operations, research, finance: all autonomous.

The barrier to building becomes imagination and the quality of your decisions.

In that world, Helm is not a luxury. It is the steering wheel.

Execution engines will continue to improve. Orchestration frameworks will mature. Agents will become more specialized.

But someone must decide.

Helm is my attempt to design that layer deliberately.

More to come.

MJ Kang Newsletter